Blog Archives

Deciphering the searchpartyd macOS process and its impacts

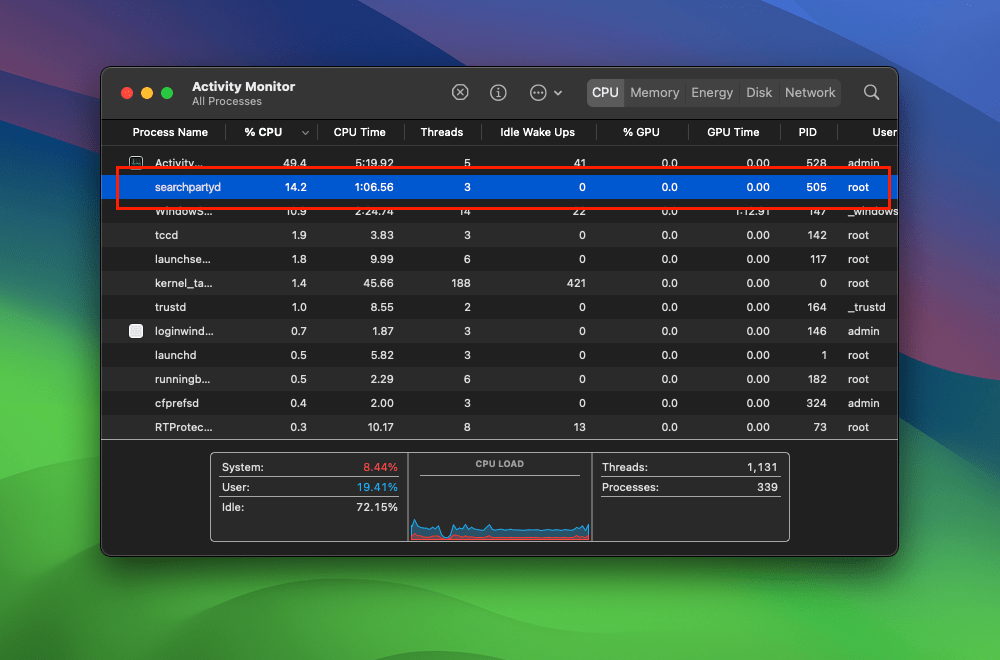

The searchpartyd process in macOS is an integral part of Apple’s innovative location tracking system, introduced with macOS 10.15 Catalina, iOS 13, and iPadOS 13.1. This daemon is a cornerstone of the Find My service, enabling users to locate their devices, even when offline. Understanding searchpartyd, its functionality, and addressing common issues like high CPU usage is crucial for macOS users.

The integral role and functionality of searchpartyd

At its core, searchpartyd serves as a major daemon within the Offline Finding (OF) system of the Find My app. Its primary function is to generate the necessary cryptographic keys and perform all related cryptographic operations. This process is vital for synchronizing keys, sending location reports as a finder device, and obtaining location reports for devices owned by the user.

When a device equipped with the Find My feature is lost, it emits Bluetooth Low Energy (BLE) signals containing a public key. These signals are picked up by finder devices, which then use the key to encrypt the location of the lost device and send this information back to Apple’s servers. The Find My app accesses these reports to help users locate their missing devices.
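To make the split between finder and owner concrete, here is a deliberately toy Python sketch of the idea. The finder encrypts using only the broadcast public key, and only the holder of the matching private key can read the result. This is not Apple's Offline Finding protocol. The textbook Diffie-Hellman group (prime 2**127 - 1, generator 3) and the SHA-256 keystream are assumptions chosen for readability, and they are not secure.

```python
import hashlib
import secrets

# Toy Diffie-Hellman parameters (ASSUMPTION, illustration only, NOT secure).
P = 2**127 - 1  # a Mersenne prime
G = 3


def keypair():
    """Generate a toy (private, public) key pair for the owner's device."""
    private = secrets.randbelow(P - 2) + 1
    return private, pow(G, private, P)


def _keystream(shared: int, length: int) -> bytes:
    """Stretch a shared secret into `length` bytes using SHA-256 in counter mode."""
    seed = shared.to_bytes(16, "big")
    out = b""
    counter = 0
    while len(out) < length:
        out += hashlib.sha256(seed + counter.to_bytes(4, "big")).digest()
        counter += 1
    return out[:length]


def finder_encrypt(owner_public: int, location: bytes):
    """Finder side: encrypt a location to the public key heard over BLE."""
    eph_private, eph_public = keypair()           # fresh key pair per report
    shared = pow(owner_public, eph_private, P)
    stream = _keystream(shared, len(location))
    cipher = bytes(a ^ b for a, b in zip(location, stream))
    return eph_public, cipher                     # this pair is what gets uploaded


def owner_decrypt(owner_private: int, eph_public: int, cipher: bytes) -> bytes:
    """Owner side: derive the same shared secret and recover the location."""
    shared = pow(eph_public, owner_private, P)
    stream = _keystream(shared, len(cipher))
    return bytes(a ^ b for a, b in zip(cipher, stream))
```

Usage: `priv, pub = keypair()`, then `owner_decrypt(priv, *finder_encrypt(pub, b"51.5074,-0.1278"))` returns the original bytes. Because the finder never holds `priv`, it cannot read back what it uploaded.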

The dual nature of searchpartyd process

Although the authentic searchpartyd process is an integral part of macOS’s security and location features, users should stay alert to potentially intrusive programs (PIPs) that may disguise themselves with similar names. Such deceptive applications can alter web browser settings, causing unwanted redirects and a flood of online advertisements. This activity not only disrupts the user experience but can also noticeably slow down the Mac.

Addressing high CPU usage and management concerns

A frequently reported issue among macOS users is the high CPU usage associated with searchpartyd. This can lead to problems like overheating and rapid battery depletion. Despite some misconceptions, searchpartyd is not a form of malware or virus but an authentic and essential part of macOS. However, users have limited control over this process due to its protected status within the operating system. Tools like EtreCheck are invaluable in identifying applications that may be causing excessive CPU usage by searchpartyd.
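If you want a quick, repeatable look at how much CPU searchpartyd is using without opening Activity Monitor, you could parse the output of `ps -axo pid,%cpu,comm`. The following is a minimal sketch. The parsing helper is pure so that you can test it. The comma-to-point conversion is an assumption for locales that print decimal commas, and the sample path in the usage note is illustrative.

```python
import subprocess


def searchpartyd_cpu(ps_output: str):
    """Return (pid, cpu_percent) pairs for searchpartyd rows in `ps -axo pid,%cpu,comm` output."""
    hits = []
    for line in ps_output.splitlines()[1:]:  # skip the header row
        parts = line.split(None, 2)
        if len(parts) < 3:
            continue
        pid, cpu, command = parts
        if command.rstrip().endswith("searchpartyd"):
            # ASSUMPTION: some locales print "12,5" rather than "12.5".
            hits.append((int(pid), float(cpu.replace(",", "."))))
    return hits


def current_ps_output() -> str:
    """Run ps on the local Mac and return its text output."""
    result = subprocess.run(
        ["ps", "-axo", "pid,%cpu,comm"], capture_output=True, text=True, check=True
    )
    return result.stdout
```

Usage: `print(searchpartyd_cpu(current_ps_output()))`. Sampling this a few times over a minute or two tells you more than a single reading, because CPU use by background daemons tends to come in bursts.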

exploring sshd-keygen-wrapper on Mac

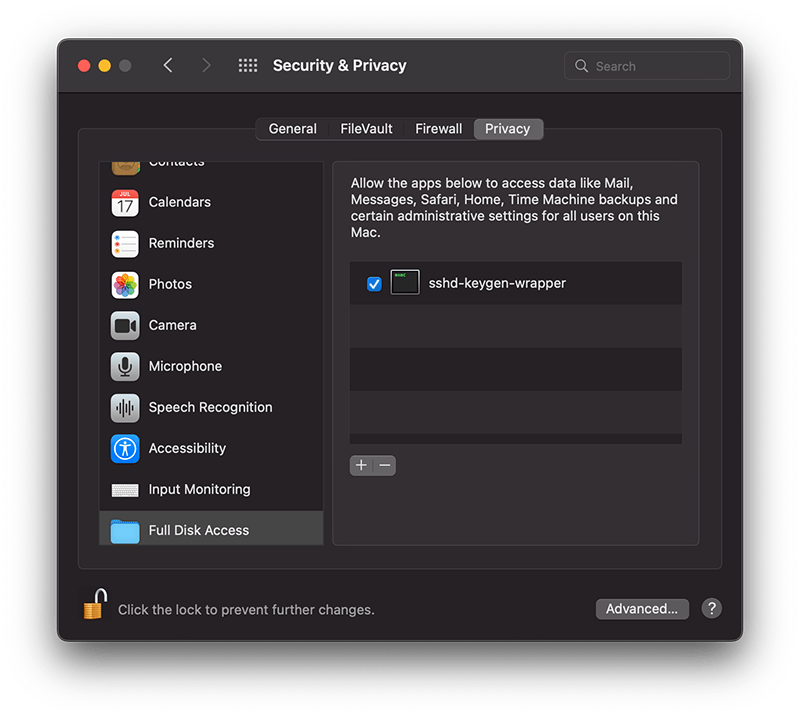

The sshd-keygen-wrapper tool, which appears in macOS Privacy settings, has drawn attention from users, particularly when they discover it in the Full Disk Access section of their Privacy preferences. While its presence might be disconcerting, a closer look at its purpose and functionality should dispel any concerns.

The inclusion of sshd-keygen-wrapper in the Full Disk Access section can be perplexing. Some users may interpret it as a sign of a security compromise or malware. In reality, sshd-keygen-wrapper is an integral component of macOS that generates SSH (Secure Shell) keys. It is tied to the Remote Login feature, which lets users enable or disable remote access to their Mac via SSH.

The visibility of sshd-keygen-wrapper in Full Disk Access correlates with the Remote Login setting. Users who have never activated Remote Login will not encounter this tool. But for those who have, the tool will be present, albeit disabled by default, indicating that its access and permissions are inactive.
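Because the tool's presence tracks the Remote Login setting, it can help to confirm that setting from the command line. Here is a small sketch built on `systemsetup -getremotelogin`. It assumes the command prints a line such as "Remote Login: On" and that it is run with admin rights (for example via sudo). Both are assumptions worth checking on your own macOS version.

```python
import subprocess
from typing import Optional


def parse_remote_login(output: str) -> Optional[bool]:
    """Interpret `systemsetup -getremotelogin` output such as 'Remote Login: On'."""
    for line in output.splitlines():
        label, sep, value = line.partition(":")
        if sep and label.strip().lower() == "remote login":
            return value.strip().lower() == "on"
    return None  # output not recognised (e.g. a permissions error message)


def remote_login_enabled() -> Optional[bool]:
    """Query the local Mac; systemsetup typically needs admin rights."""
    result = subprocess.run(
        ["systemsetup", "-getremotelogin"], capture_output=True, text=True
    )
    return parse_remote_login(result.stdout)
```

Returning `None` rather than `False` for unrecognised output keeps "I couldn't tell" separate from "Remote Login is off".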

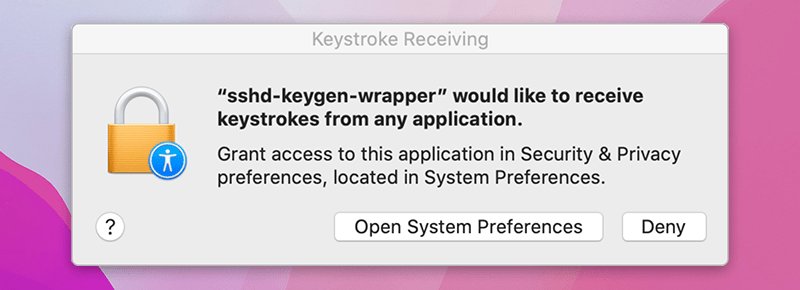

While navigating the Privacy settings on a Mac, users might come across a popup message stating, “‘sshd-keygen-wrapper’ would like to receive keystrokes from any application.” This message can be particularly perplexing, leading to concerns about the tool’s intentions and whether it poses any security risks.

Is it malware?

A prevalent misconception is that sshd-keygen-wrapper is malware or unwanted software. In fact, the tool is a genuine part of macOS with no malicious intent. Its placement in the Full Disk Access section is tied to the SSH remote access feature: turning on Remote Login in System Preferences brings sshd-keygen-wrapper into play, and it generates the SSH keys used for remote connections.
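To see the result of that key generation, you can list the SSH server's private host keys. This sketch assumes the keys live in /etc/ssh and follow the usual OpenSSH naming, ssh_host_<type>_key. That is an assumption about your macOS version, so pass a different directory if yours differs.

```python
from pathlib import Path


def host_key_files(ssh_dir: str = "/etc/ssh"):
    """Return the names of private host keys (ssh_host_*_key) found in ssh_dir."""
    directory = Path(ssh_dir)
    if not directory.is_dir():
        return []
    # The pattern deliberately skips the matching *.pub public keys.
    return sorted(p.name for p in directory.glob("ssh_host_*_key"))
```

An empty list on a Mac where Remote Login has never been enabled would be consistent with the behaviour described above. This is only a sanity check, not proof either way.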

Should sshd-keygen-wrapper be granted Full Disk Access?

A frequently posed question revolves around whether sshd-keygen-wrapper should receive full disk access. Users contemplating remote access to their Mac via SSH might consider this option. By granting Full Disk Access to sshd-keygen-wrapper, macOS inherently extends the same privilege to SSH. As a result, any individual accessing the Mac through SSH can access all data, encompassing emails, messages, and files. The choice to activate this feature should stem from individual security assessments and requirements.

wifi display – simple network awareness

Ever wished you could see at a glance whether your network has changed without having to click on the Wifi icon in the Status bar to check the currently active connection? I know I have, particularly when toting the laptop between work, home and coffee shop.

Although you can require admin approval for changing networks in System Preferences, in practice that can often be quite disruptive. It also has the potential to expose your login password in public places or situations where it might be awkward or inconvenient to insist on privacy while you type it in.

It would be easier, it seemed to me, if I could just always see the name of the currently connected network in the Status bar, instead of having to actively go and look to see if it has changed.

I decided to solve the problem by writing my own little Wifi Display utility, which I’m sharing here for free for anyone that has a similar need.

The Wifi Display.app simply displays the currently active SSID Wifi name in the Status bar. You can command-drag the Wifi name along the Status bar to move it next to your Wifi icon for visual contiguity. The app is sandboxed and signed with my Apple developer ID.
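Wifi Display's own source isn't shown here, so the following is not how the app works. It is only a hedged command-line sketch of the same idea: read the current SSID and notice when it changes. It assumes `networksetup -getairportnetwork en0` prints a line beginning "Current Wi-Fi Network:" (older systems said "AirPort"), and that en0 is the Wi-Fi interface. Neither holds on every Mac.

```python
import subprocess
from typing import Optional

PREFIXES = ("Current Wi-Fi Network:", "Current AirPort Network:")


def parse_ssid(output: str) -> Optional[str]:
    """Extract the network name from `networksetup -getairportnetwork` output."""
    text = output.strip()
    for prefix in PREFIXES:
        if text.startswith(prefix):
            return text[len(prefix):].strip()
    return None  # e.g. when not associated with any network


def current_ssid(interface: str = "en0") -> Optional[str]:
    """ASSUMPTION: en0 is the Wi-Fi interface on this Mac."""
    result = subprocess.run(
        ["networksetup", "-getairportnetwork", interface], capture_output=True, text=True
    )
    return parse_ssid(result.stdout)


def network_changed(previous: Optional[str], current: Optional[str]) -> bool:
    """True when the SSID differs from the last one we saw (including joining/leaving)."""
    return previous != current
```

A status-bar app would poll or observe for changes and redraw. The point of the sketch is simply that the network name is one short command away.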

Wifi Display is free to use and requires macOS 10.10 Yosemite or higher.

Share and enjoy! 🙂

SIP’s soft underbelly – a hiding place for malware?

There’s no doubt that System Integrity Protection has helped keep macOS more secure since its introduction in 10.11, and it continues to see updates that restrict what can be modified and where non-system files can be stored.

Apple’s official, user-facing documentation says:

Unfortunately, this documentation leaves out an important part of the story. The full list of protected paths and process labels can be found in a bunch of related files in the Sandbox folder within System/Library.

Among these is a list of protected locations in the rootless.conf file. That file, however, tells a little more than Apple’s user-facing documentation: not only does it list the locations that can’t be modified, it also lists some that can. Despite what Apple officially says, not everything in System is, it turns out, protected by SIP.

We can use a quick one-liner on the command line to output the exceptions on the current system like so:

awk '$1 ~ /^\*/' /System/Library/Sandbox/rootless.conf
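For anyone who would rather script this check than run awk by hand, here is a Python equivalent of the same filter. It keeps lines whose first whitespace-separated field begins with an asterisk, and it can also return just the path column. It is a sketch that assumes rootless.conf keeps its current two-column, whitespace-separated layout.

```python
def sip_exceptions(conf_text: str):
    """Python equivalent of the awk one-liner: keep lines whose first field starts with an asterisk."""
    lines = []
    for line in conf_text.splitlines():
        fields = line.split()
        if fields and fields[0].startswith("*"):
            lines.append(line)
    return lines


def exception_paths(conf_text: str):
    """Return only the path part (everything after the first field) of each exception line."""
    paths = []
    for line in sip_exceptions(conf_text):
        parts = line.split(None, 1)
        if len(parts) == 2:
            paths.append(parts[1].strip())  # paths may contain spaces
    return paths
```

Usage on a Mac: `from pathlib import Path; print(*exception_paths(Path("/System/Library/Sandbox/rootless.conf").read_text()), sep="\n")`.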

On my 10.13.6 system that returns 9 locations, four of which are within the System’s Library folder:

Let’s check to see if these paths are really writable. We’ll create a simple script that, when run, produces a dialog box showing where the script is located. We first create the script in the /tmp folder, give it executable permissions, then move it into the System Library’s ‘Speech’ folder. We can do all this on the command line in Terminal, then execute it:
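The exact commands used for this test aren't reproduced here. The following is only a sketch of the first two steps: write a shell script that shows a dialog reporting its own path ($0), then make it executable. No privileges are needed for this part. The filename whereami.sh is hypothetical.

```python
import stat
from pathlib import Path

# The shell script: osascript shows a dialog containing the script's own path ($0).
SCRIPT = """#!/bin/sh
/usr/bin/osascript -e "display dialog \\"Running from: $0\\""
"""


def make_test_script(path: str = "/tmp/whereami.sh") -> Path:
    """Write the dialog script and add execute permission for user, group and others."""
    script = Path(path)
    script.write_text(SCRIPT)
    mode = script.stat().st_mode
    script.chmod(mode | stat.S_IXUSR | stat.S_IXGRP | stat.S_IXOTH)
    return script
```

The remaining steps are done in Terminal, and moving the file needs administrator rights: `sudo mv /tmp/whereami.sh /System/Library/Speech/`, followed by `/System/Library/Speech/whereami.sh`.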

Sure enough, our test produces a dialog showing that the script is running out of one of the locations listed as an exception in rootless.conf.

This, of course, isn’t a SIP vulnerability. The paths we’re talking about are listed as exceptions to SIP protection, after all; what’s more, they do indeed require administrator privileges to write to (although not to run). The issue is that very few users will know that these paths are exceptions. In fact, aside from their being written in rootless.conf, there may be no other place where they are all documented, at least not at the user level. And that obscurity, of course, means many will have no idea that malware can install itself in places in the System folder where, for sure, most users will fear to tread.

Moreover, even if the user were to notice these paths in a process output or list of open files in Activity Monitor, it would be very easy to overlook them as being legitimate since they would all begin with the path ‘/System/Library/…’. Naturally, we assume the System’s folder is reserved for system files, not the user’s and not third-party applications’ either. Apple’s user-facing documentation that we referred to earlier encourages this very assumption.

What does it all mean?

In this post we’ve seen that there are places in the System folder that could easily be adopted as a nice hiding place for malware which has acquired elevated privileges. The aim here was to make these exceptions a little less obscure and to encourage people – especially those troubleshooting macOS for malware and adware issues – to add these locations to their list of places to keep an eye on.
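As a starting point for keeping an eye on those locations, this sketch lists every file under a set of directories, optionally only those modified after a given time. You supply the directories yourself, for example from the rootless.conf exceptions. The recency filter is an assumption about what is useful for triage, not something rootless.conf provides.

```python
import os


def files_in_exception_dirs(dirs, newer_than: float = 0.0):
    """List files under `dirs` whose modification time is after `newer_than` (Unix time)."""
    found = []
    for top in dirs:
        # os.walk silently skips directories it cannot read and yields nothing for missing ones.
        for dirpath, _dirnames, filenames in os.walk(top):
            for name in filenames:
                path = os.path.join(dirpath, name)
                try:
                    if os.stat(path).st_mtime > newer_than:
                        found.append(path)
                except OSError:
                    continue  # broken symlinks or vanished files are skipped
    return sorted(found)
```

Running it once to record a baseline, then later with `newer_than` set to the baseline time, makes any newcomer in these folders stand out.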

Enjoy!😃

accessing TCC.db without privileges

Earlier this year, Digita Security’s Patrick Wardle took apart a cross-platform backdoor trojan he nicknamed ‘ColdRoot’. Wardle was retro-hunting possible malware by searching for apps on VirusTotal that access Apple’s TCC privacy database.

For those unfamiliar, TCC.db is the database that backs the System Preferences > Security & Privacy | Accessibility preferences pane and which controls, among other things, whether applications are allowed access to the Mac’s Accessibility features. Of interest from a security angle is that one of the permissions an app with access to Accessibility can gain is the ability to simulate user clicks, such as clicking “OK” and similar buttons in authorisation dialogs.

One particular comment in Wardle’s article caught my eye:

there is no legitimate or benign reason why non-Apple code should ever reference [the TCC.db] file!

While in general that is probably true, there is at least one good reason I can think of why a legitimate app might reference that file: reading the TCC.db used to be the easiest way to programmatically retrieve the list of apps that are allowed Accessibility privileges, and one reason why a piece of software might well want to do that is if it’s a piece of security software like DetectX Swift.

If your aim is to inform unsuspecting users of any changes or oddities in the list (such as adware, malware or just sneaky apps that want to backdoor you for their own ends), then reading TCC.db directly is the best way to get that information.

Just call me ‘root’

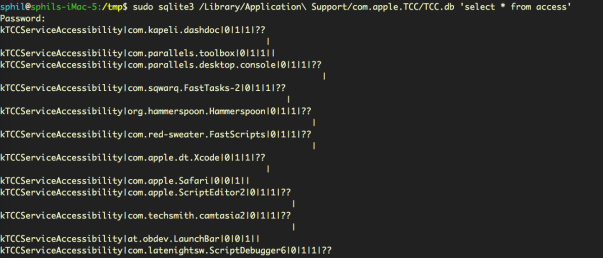

Since Apple put TCC.db under SIP protection subsequent to my reports on Dropbox’s user-unfriendly behaviour, apps are no longer able to write to the database via SQL injection. An app with elevated privileges can, however, still read the database. A sufficiently privileged app (or user) can output the current list with:

sudo sqlite3 /Library/Application\ Support/com.apple.TCC/TCC.db 'select * from access'

On my machine, this indicates that there are twelve applications in the System Preferences’ Privacy pane, all of which are enabled save for two, namely LaunchBar and Safari:

We can see from the output that LaunchBar and Safari both have the ‘allowed’ integer set to ‘0’ (the middle of the three values in “0|0|1”), whereas all the other apps have it set to ‘1’ (“0|1|1”).
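The same privileged read can be done from a script, which makes it easy to split enabled and disabled entries. This sketch opens the database read-only with Python's sqlite3 module. It assumes the 10.13-era schema described above, with service, client and allowed columns in the access table, and it filters on the kTCCServiceAccessibility service. Later macOS releases changed the schema, so treat the column names as an assumption. Like the sqlite3 command, it needs to run with elevated privileges.

```python
import sqlite3
from urllib.parse import quote

ACCESSIBILITY = "kTCCServiceAccessibility"


def accessibility_clients(db_path: str):
    """Return {client: allowed} for Accessibility rows in a TCC.db (opened read-only).

    ASSUMPTION: the `access` table has `service`, `client` and `allowed` columns.
    """
    conn = sqlite3.connect(f"file:{quote(db_path)}?mode=ro", uri=True)
    try:
        rows = conn.execute(
            "SELECT client, allowed FROM access WHERE service = ?", (ACCESSIBILITY,)
        ).fetchall()
    finally:
        conn.close()
    return {client: bool(allowed) for client, allowed in rows}
```

Usage: `sudo python3 tcc_check.py`, where the script calls `accessibility_clients("/Library/Application Support/com.apple.TCC/TCC.db")`. Opening with `mode=ro` keeps the script from ever attempting a write.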

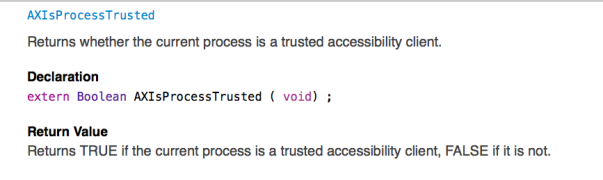

It’s not clear to me why reading the database should require privileges. Certainly, Apple have provided APIs by which developers can test to see if their own apps are included in the database or not. The AXIsProcessTrusted() global function, for example, will return whether a calling process is a trusted accessibility client (see AXUIElement.h for related functions):
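As an illustration of how narrow that API is, here is a sketch that calls AXIsProcessTrusted() from Python through ctypes. It answers only for the process running the script, which is exactly the limitation discussed here. Loading the function from the ApplicationServices framework path is an assumption about where the symbol is exported. The function returns None on non-Mac systems.

```python
import ctypes
import sys
from typing import Optional

APP_SERVICES = "/System/Library/Frameworks/ApplicationServices.framework/ApplicationServices"


def is_trusted_accessibility_client() -> Optional[bool]:
    """Call AXIsProcessTrusted() for the current process; None when not running on macOS."""
    if sys.platform != "darwin":
        return None
    lib = ctypes.cdll.LoadLibrary(APP_SERVICES)
    lib.AXIsProcessTrusted.restype = ctypes.c_bool  # C type Boolean
    lib.AXIsProcessTrusted.argtypes = []
    return bool(lib.AXIsProcessTrusted())
```

Nothing in this call lets you ask about any other app, which is why the rest of this post looks elsewhere.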

However, there remains a case (as I will demonstrate shortly) where developers may well need to know whether apps other than their own are, or are not, in the list. Moreover, there doesn’t seem to be any obvious vulnerability in allowing read access to that data, just so long as the write protection remains, as it does, in place.

The use case for being able to read the TCC.db database is clearly demonstrated by apps like DetectX Swift: security apps that conform to the principle of least privilege, always a good maxim to follow whenever practical. Asking users to grant elevated privileges just to check which apps are in Accessibility is akin to opening the bank vault in order to do an employee head count. Surely, it would be more secure to be able to determine who, if anyone, is in the vault without having to actually take the risk of unlocking the door.

Did they put a CCTV in there?

Without direct access to TCC.db, we might wonder whether there are any other less obvious ways by which we can determine which apps are able to access Accessibility features. There are three possibilities for keeping an eye on bad actors trying to exploit Accessibility without acquiring elevated privileges ourselves, each of which has some drawbacks.

1. Authorisation dialogs

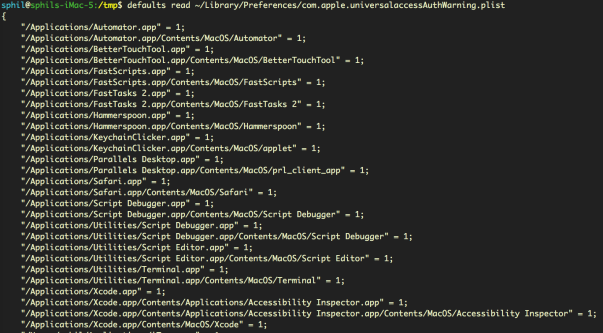

The first is that we can read the property list that records all invocations of the Accessibility authorisation dialog, without admin rights:

defaults read ~/Library/Preferences/com.apple.universalaccessAuthWarning.plist

That gives us a list of all the apps that macOS has ever thrown the ‘some.app would like permission to control your computer’ dialog alert for, along with an indication of the user’s response (1 = they opened System Preferences, 0 = they hit Deny).

This list could prove useful for identifying adware installers that try to trick users into allowing them into Accessibility (looking at you, PDFPronto and friends), but its main drawback is that the list is historical and doesn’t indicate the current denizens of Accessibility. It doesn’t tell us whether the apps in the list are currently approved or not, only that each app listed once presented the user with the option of opening System Preferences and what the user chose to do about it at that time.
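To use that history in a script rather than reading `defaults` output by eye, you could load the plist with Python's plistlib and group entries by the recorded response. This sketch assumes the file is a flat dictionary that maps each app to an integer, using the 1 and 0 meanings described above. Check that against the `defaults read` output on your own Mac.

```python
import plistlib
from pathlib import Path


def auth_warning_history(plist_path=None):
    """Return (opened, denied) app lists from the Accessibility auth-warning plist.

    ASSUMPTION: flat dict of app -> int (1 = opened System Preferences, 0 = Deny).
    """
    if plist_path is None:
        plist_path = Path.home() / "Library/Preferences/com.apple.universalaccessAuthWarning.plist"
    with open(plist_path, "rb") as fh:
        data = plistlib.load(fh)  # handles both XML and binary plists
    opened = sorted(k for k, v in data.items() if v == 1)
    denied = sorted(k for k, v in data.items() if v == 0)
    return opened, denied
```

The "opened" group is the interesting one for triage: those are apps the user at least walked towards System Preferences for.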

2. Distributed Notifications

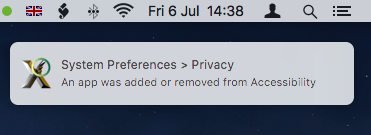

The second option is that developers can register their apps to receive notifications when any other application gets added or removed from the list with code similar to this:

DistributedNotificationCenter.default().addObserver(self, selector: #selector(self.accessibilityChanged), name: NSNotification.Name.init("com.apple.accessibility.api"), object: nil)

This ability was in fact added to DetectX Swift in version 1.04.

However, Apple hasn’t made this API particularly useful. Although we don’t need elevated privileges to call it, the NSNotification returned doesn’t contain a userInfo dictionary – the part in Apple’s notification class that provides specific information about a notification event. All that the notification provides is news that some change occurred, but whether that was an addition or a deletion, and which app it refers to, is not revealed:

Even so, notification of the change is at least something we can present to the user. Alas, this is only useful if the app that’s receiving the notification is actually running at the time the change occurs. For an on-demand search tool like DetectX Swift, which is only active when the user launches it, the notification is quite likely to be missed.
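One way to work around both the missing userInfo and the missed notifications is to keep your own record of the last-known list. You would obtain that list by some other route, such as one of the methods in this post, and compare it whenever a notification arrives or the app launches. The sketch below shows only the comparison and a JSON snapshot file. The snapshot location is hypothetical.

```python
import json
from pathlib import Path


def diff_snapshots(previous, current):
    """Compare two collections of app identifiers and return (added, removed)."""
    before, after = set(previous), set(current)
    return sorted(after - before), sorted(before - after)


def load_snapshot(path: Path):
    """Read the last saved list, or an empty list on first run."""
    return json.loads(path.read_text()) if path.exists() else []


def save_snapshot(path: Path, apps) -> None:
    """Persist the current list for the next comparison."""
    path.write_text(json.dumps(sorted(apps)))
```

Running the diff at launch, and not only when notified, means an on-demand tool still catches changes made while it wasn't running.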

It would be nice, at least, if Apple would provide a more useful notification or an API for developers wishing to keep their users safe and informed. The lack of a userInfo dictionary in the notification was apparently reported as a bug to Apple several years back, but I suppose it could always use a dupe.

3. AppleScript – everyone’s favourite ‘Swiss Army Knife’

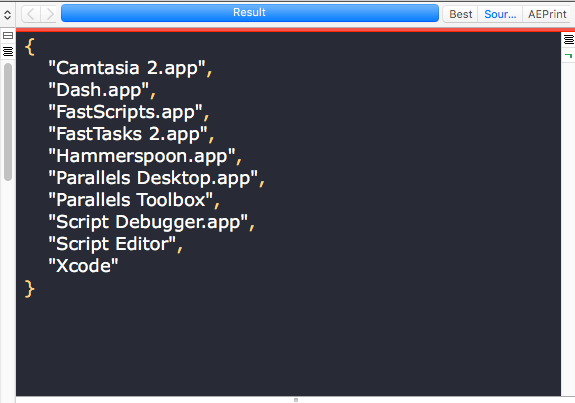

There is, as it turns out, a third way to reliably get exactly all the info we need about who has access to Accessibility. We can use AppleScript to return a complete list of apps in Accessibility that are enabled. Note that the output of this unprivileged script, shown here in the results pane of Script Debugger, returns a more readable version of the same list of apps we obtained from the privileged sqlite3 query of TCC.db, minus Safari and LaunchBar, which as we previously noted were not enabled:

Fantastic! There’s just one problem. While this AppleScript does not require blanket elevated privileges to run – in short, it doesn’t require an administrator password – it does need to be run by an app that is itself already in the list of Accessibility apps. If you have a script runner like Apple’s Script Editor, or third-party tools like Script Debugger or FastScripts, already approved in Accessibility, then you can run it without authorisation. It’s also worth noting that the script relies on launching and quitting System Preferences, which it attempts to do as quietly as possible.

As for DetectX Swift, I may consider adding something like this to a future version as an option for users who are happy to add DetectX to the list of apps in Accessibility.

Enjoy! 🙂

Have your own tips about accessing TCC.db? Let us know in the Comments!

Featured pic: Can’t STOP Me by smilejustbcuz

defending against EvilOSX, a python RAT with a twist in its tail

EvilOSX is a malware project hosted on GitHub that offers attackers a highly customisable and extensible attack tool that will work on both past and present versions of macOS. The project can be downloaded by anyone and, should that person choose, be used to compromise the Macs of others.

What particularly interested me about this project was how the customisation afforded to the attacker (i.e., anyone who downloads and builds the project, then deploys it against someone else) makes it difficult for security software like my own DetectX Swift to accurately track it down when it’s installed on a victim’s machine.

In this post we’ll explore EvilOSX’s capabilities, customisations, and detection signatures. We’ll see that our ability to effectively detect EvilOSX will depend very much on the skill of the attacker and the determination of the defender.

For low-skilled attackers, we can predict a reasonably high success rate. However, attackers with more advanced programming skills who are able to customise EvilOSX’s source code to avoid detection are going to present a bigger problem. Specifically, they’re going to put defenders in an awkward position where they will have to balance successful detection rates against the risk of increasing false positives.

We’ll conclude the discussion by looking at ways that individuals can choose for themselves how to balance that particular scale.

What is it?

EvilOSX is best described as a RAT. The appropriately named acronym stands for remote access trojan, which in human language means a program that can be used to spy on a computer user by accessing things like the computer’s webcam, microphone, and screenshot utility, and by downloading personal files without the victim’s knowledge. It may or may not have the ability to acquire the user’s password, but in general it can be assumed that a RAT will have at least the same access to files on the machine as the login user that has been compromised.

Whether EvilOSX is intentionally malicious or ‘an educational tool’ is very much a matter of perspective. Genuine malware authors are primarily in the business of making money, and the fact that EvilOSX (the name is a bit of a giveaway) is there for anyone to use (or abuse) without obvious financial benefit to the author is arguably a strong argument for the latter. What isn’t in doubt, however, is that the software can be readily used for malicious purposes. Irresponsible to publish such code? Maybe. Malicious? Like all weapons, that depends on who’s wielding it. And as I intimated in the opening section, exactly how damaging this software can be will very much depend on the intentions and skills of the person ‘behind the wheel’.

How does it work?

When an attacker decides to use EvilOSX, they basically build a new executable on their own system from the downloaded project, and then find a way – through social engineering or exploiting some other vulnerability – to run that executable on the target’s system.

There is no ‘zero-day’ here, and out of the box EvilOSX doesn’t provide a dropper to infect a user’s machine. That means everybody already has a first line of defence against a malicious attacker with this tool: Prudent browsing and careful analysis of anything you download, especially in terms of investigating what a downloaded item installs when you run it (DetectX’s History function is specifically designed to help you with this).

EvilOSX doesn’t need to be run with elevated privileges, however, nor does the attacker need to compromise the user’s password. As intimated earlier, it’ll run with whatever privileges the current user has (but, alas, that is often Admin for many Mac users). All the attacker needs to do is to convince the victim to download something that looks innocuous and run it.

Once run, the malicious file will set up the malware’s persistence mechanism (by default, a user Launch Agent) and executable (the default is in the user’s ~/Library/Containers folder) and then delete itself, thus making it harder to discover after the fact how the infection occurred.

After successful installation, the attacker can now remotely connect to the infected machine whenever both the client (i.e., victim) and server (i.e., attacker) are online.

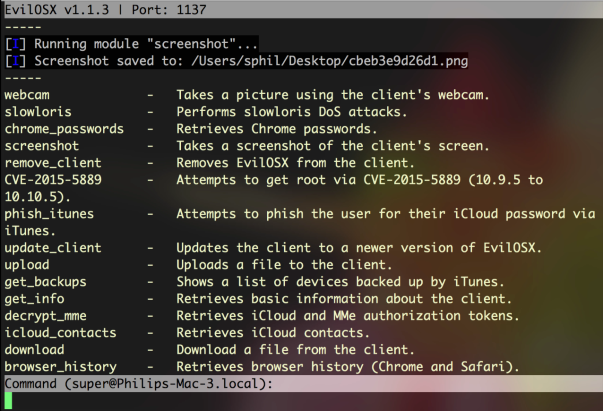

Once the attacker has surreptitiously connected to the client, there are a number of options, including webcam, screenshots, and downloading and exfiltrating browser history.

In my tests, some of the modules shown in the above image didn’t work, but the webcam, screenshots, browser history and the ability to download files from the victim’s machine were all fully functional.

Customisation options

By default, EvilOSX offers the attacker the option of creating a LaunchAgent with a custom name (literally anything the attacker wants to invent) or of using the default, com.apple.EvilOSX.

That in itself isn’t a problem for DetectX Swift, which examines all Launch Agents and their program arguments regardless of the actual filename. The malware also offers the option to not install a Launch Agent at all. Again, DetectX Swift will still look for the malware even if there’s no Launch Agent, but more on this in the final sections below.
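Examining program arguments rather than filenames is straightforward to sketch. The following reads each plist in a LaunchAgents folder and flags jobs whose Program or ProgramArguments point at a hidden file or into ~/Library/Containers, matching the default path described next. These two heuristics are assumptions based on EvilOSX's defaults, not DetectX Swift's actual rules.

```python
import plistlib
from pathlib import Path


def suspicious_agents(agents_dir: Path):
    """Flag launch agents whose arguments point at hidden files or into ~/Library/Containers."""
    containers = str(Path.home() / "Library/Containers")
    flagged = []
    for plist in sorted(Path(agents_dir).glob("*.plist")):
        try:
            with open(plist, "rb") as fh:
                data = plistlib.load(fh)
        except (OSError, ValueError):  # unreadable or malformed plists are skipped
            continue
        if not isinstance(data, dict):
            continue
        args = [str(a) for a in (data.get("ProgramArguments") or [])]
        if "Program" in data:
            args.insert(0, str(data["Program"]))
        for arg in args:
            # Heuristics: hidden executable name, or anything inside ~/Library/Containers.
            if arg.startswith(containers) or Path(arg).name.startswith("."):
                flagged.append((plist.name, arg))
                break
    return flagged
```

Usage: `suspicious_agents(Path.home() / "Library/LaunchAgents")`. A hit is a prompt to look closer, not a verdict.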

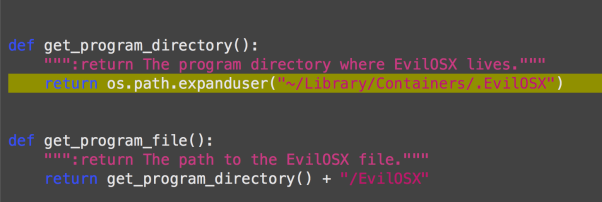

If configured, the malware installs the Launch Agent and, by default, points it to run a binary located at ~/Library/Containers/.EvilOSX. There’s no option for changing this in the setup routine itself, but the path to the program argument is easily modified if the attacker is willing to do some basic editing of the source code.

Making matters even more difficult is that with a little know-how, the attacker could easily adapt EvilOSX to not use a Launch Agent at all and to use one of a variety of other persistence methods available on OSX like cron jobs, at jobs and one or two others that are not widely known. I’ll forgo giving a complete rundown of them all here, but for those interested in learning more about it, try Jaron Bradley’s OS X Incident Response: Scripting and Analysis for a good intro.

String pattern detection

Faced with unknown file names in unknown locations, how does an on-demand security tool like DetectX Swift go about ensuring this kind of threat doesn’t get past its detector search? Let’s start to answer that by looking at the attack code that runs on the victim’s machine.

We can see what the attack code is going to look like before it’s built from examining this part of the source code:

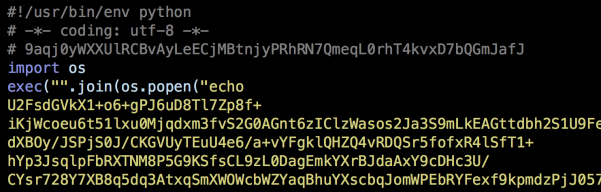

As the image above shows, the structure and contents of the file are determined by the output_file.write commands. Before exploring those, let’s take a look at what the finished file looks like. Here’s the start of the file:

and here are the final lines:

Notice how the first four lines of the executable match up with the first four output_file.write commands. There’s a little leeway here for an attacker to make some customisations. The first line is required because, as noted by the developer, changing that will effectively nullify the ability of the Launch Agent to run the attack code. Line 4, or some version of it, is also pretty indispensable, as the malware is going to need functions from Python’s os module in order to run a lot of its own commands. Line 3, however, is more easily customised. Note in particular that the output_file.write instruction defines how long the random key shall be: between 10 and 69 (inclusive) characters long. One doesn’t have to be much of an expert to see how easy it would be to change those values.

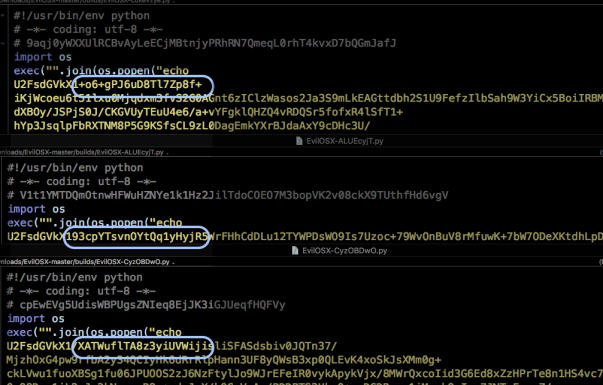

Line 5 in the executable is where things get really interesting, both for attacker and defender. As it is, that line contains the entire attack code, turned into gibberish by first encoding the raw Python code in base64 and then encrypting it with AES256. That will be random for each build, based on the random key written at Line 3. We can see this in the next image, which shows the encrypted code from three different builds. Everything from the highlighted box onwards to the last 100 or so characters of the script is random.

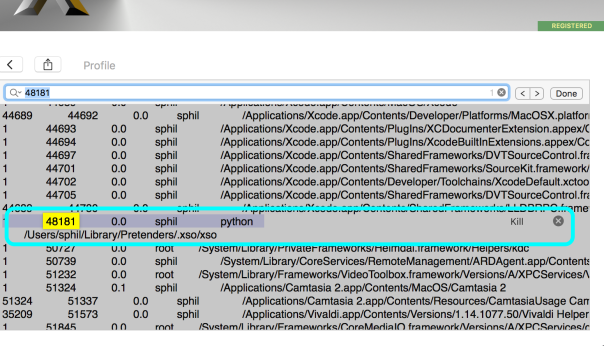

However, as one of my favourite 80s pop songs goes, some things change, some stay the same. The first thing that we can note, as defenders, is that when this code is running on a victim’s machine, we’re going to see it in the output of ps. If you want to try it on your own machine, run this from the command line (aka in the Terminal.app):

ps -axo ppid,pid,command | grep python | grep -v grep

That will return anything running on your Mac with python in the command or command arguments.
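If you would rather do this filtering in a script, here is a sketch that parses the same `ps -axo ppid,pid,command` output. Unlike grep, it matches case-insensitively, so paths such as Python.framework are caught too. It drops its own interpreter process, which plays the same role as `grep -v grep` in the one-liner.

```python
import os
import subprocess


def python_processes(ps_output: str):
    """Return (ppid, pid, command) for rows of `ps -axo ppid,pid,command` mentioning python."""
    hits = []
    for line in ps_output.splitlines()[1:]:  # skip the header row
        parts = line.split(None, 2)
        if len(parts) != 3 or "python" not in parts[2].lower():
            continue
        ppid, pid, command = int(parts[0]), int(parts[1]), parts[2]
        if pid == os.getpid():
            continue  # don't report the interpreter running this script
        hits.append((ppid, pid, command))
    return hits


def current_ps_output() -> str:
    """Run ps with the same columns as the one-liner above."""
    return subprocess.run(
        ["ps", "-axo", "ppid,pid,command"], capture_output=True, text=True, check=True
    ).stdout
```

Usage: `for ppid, pid, cmd in python_processes(current_ps_output()): print(pid, cmd)`.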

Of course, the victim (and yourself!) may well have legitimate Python programs running. To limit our hits, we can run the file command on each result from ps and see what it returns. Our attack code, being a single, heavily encrypted and extremely long line in the region of 30,000 characters, will return this indicator:

file: Python script text executable, ASCII text, with very long lines
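The `file` utility decides on "very long lines" using its own internal cut-off. A rough stand-in you can script is to measure the longest line directly. In this sketch the 10,000-character threshold is an assumption, chosen because the attack line here runs to around 30,000 characters. Tune it to your own tolerance for false positives.

```python
def longest_line(path: str) -> int:
    """Length in bytes of the longest line in a file (line endings excluded)."""
    with open(path, "rb") as fh:
        return max((len(line.rstrip(b"\r\n")) for line in fh), default=0)


def looks_like_packed_script(path: str, threshold: int = 10_000) -> bool:
    """Rough stand-in for `file` reporting 'very long lines'; the threshold is an assumption."""
    return longest_line(path) >= threshold
```

Reading in binary mode avoids decoding errors on files that aren't valid UTF-8.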

That still isn’t going to be unique, but the test will further narrow down our list of candidates. We can then use string pattern detection on the remaining suspects to see which contain the following plain text items:

- import os

- exec("".join(os.popen("echo

- -md sha256 | base64 --decode")

We could arguably even include this:

U2FsdGVkX1

which occurs immediately after echo, but for reasons I’m about to explain, that might not be a good idea. Still, from the default source code provided by the developer, if we find all of those indicators in the same file, we can be reasonably certain of a match (in truth, there are a couple of other indicators that I haven’t mentioned here in order to keep DetectX Swift one step ahead of the attackers).
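Here is a minimal sketch of that string pattern check, using only the plain-text indicators listed above. It also shows where U2FsdGVkX1 comes from: it is the base64 form of the "Salted__" header that OpenSSL writes at the start of salted encrypted output. That is why the prefix stays the same across builds even though everything after it is random. Making that indicator optional mirrors the caveat above.

```python
import base64

# Plain-text indicators from the default EvilOSX build discussed above.
INDICATORS = (
    "import os",
    'exec("".join(os.popen("echo',
    '-md sha256 | base64 --decode")',
)
# First 10 base64 characters of OpenSSL's "Salted__" header: "U2FsdGVkX1".
OPENSSL_SALTED = base64.b64encode(b"Salted__")[:10].decode()


def matched_indicators(text: str, include_salted: bool = False):
    """Return which indicators appear in `text`."""
    wanted = list(INDICATORS) + ([OPENSSL_SALTED] if include_salted else [])
    return [s for s in wanted if s in text]


def is_likely_default_build(text: str, include_salted: bool = False) -> bool:
    """True only when every requested indicator is present in the same file."""
    expected = len(INDICATORS) + (1 if include_salted else 0)
    return len(matched_indicators(text, include_salted)) == expected
```

Requiring all indicators together keeps false positives low, at the cost of missing any build where even one line has been rewritten.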

Unfortunately for defenders, the attacker has a few workarounds available for defeating string pattern detection. To begin with, the attacker could adapt the code to use something other than base64, or indeed nothing at all. Similarly, AES256 isn’t the only option for encryption. For these reasons, we can’t assume that we’ll find something like U2FsdGVkX1 in the malicious file. Then there’s the original source code’s use of the long-deprecated os.popen. That is an odd choice to start with, and someone with a bit of experience in Python would be able to rewrite that line to avoid the telltale indicators.

Skill level and customisation options

Advanced detection options

At this point you may be feeling that the attacker holds all the cards, and to a certain extent that is true, but there are some positive takeaways. First, we can be fairly sure of catching the neophyte hackers (aka “script kiddies”) with little to no programming experience who are trying to hack their friends, school or random strangers on the internet. The motivation to adapt the code is probably not going to be there for a large number of people just doing it 4 the lulz.

Secondly, depending on your tolerance for investigating false positives (and as I’ll explain below), if you needed to be super vigilant, you could simply check every Python script running on your Mac that file identifies as having ‘very long lines’. Certainly, there are legitimate programs like that, but the number still isn’t going to be high on any given machine, and the paths to those legitimate programs are going to be readily identifiable. If security is of overriding importance, it’s not much inconvenience, and it’s time well spent.

By default, DetectX Swift will find instances of EvilOSX running on a Mac when EvilOSX is used out of the box, and when it’s used with a modified launch agent and executable path. It will also still find it when the attacker has made certain alterations to the source code. However, a determined attacker who chooses to rewrite the source code specifically to avoid string pattern detection is always going to be one step ahead of our heuristics.

We are not out of options though. You can still use DetectX Swift combined with the Terminal.app as a means to making custom detections as mentioned above. Here’s how:

1. Launch DetectX Swift and allow it to search for the variations of EvilOSX it knows about. If nothing is returned, go into the Profile view.

2. Click inside the dynamic profiler view, press Command-F and type python into the search field.

3. If there are no hits in the Running Processes section, you don’t have EvilOSX running on your machine.

4. If there are any hits within the Running Processes section, make a note of each one’s command file path by selecting it in the view and pressing Command-C to copy it.

5. Switch to the Terminal app, type file followed by a space, and press Command-V to paste. If the path has any spaces in it, surround it in single quotes. Then press Return.

6. If the path doesn’t come back with ‘very long lines’, the file isn’t EvilOSX.

7. If it does, press the Up Arrow key to put the previous command back at the prompt, use Control-A to move the cursor to the beginning of the line, and replace the word file with cat (if you’re familiar with vi or similar command line text editors, use one of those instead). Press Return, then inspect the output from cat with the following in mind:

8. Does the file end with readlines()))?

9. Use Command and the Up Arrow to go back up to the beginning of the file. How closely does the file match what you’ve seen here? Look for variations like from os import * and import subprocess.

10. Consider the path that you pasted in. Does it look like it belongs to a genuine program, or is it completely unfamiliar? Anything that points to ~/Library and isn’t contained within a folder named after a recognised application should warrant further investigation.

You’ll need to consider carefully the answers to 8, 9, & 10, with an emphasis on the latter, for each python file you tested to make an assessment. If you’re in any doubt, contact us here at Sqwarq and we’ll be glad to take a look at it and confirm one way or the other.
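The checks behind questions 8, 9 and 10 can be partly automated once you have a suspect's path and contents. Here is a sketch. The import regex and the "under ~/Library" test are simplifications chosen for illustration, and deciding whether a folder belongs to a recognised application remains a human judgement.

```python
import os
import re

IMPORT_PATTERN = re.compile(
    r"^\s*(import\s+(os|subprocess)\b|from\s+(os|subprocess)\s+import\b)", re.MULTILINE
)


def triage(path: str, script_text: str):
    """Answer the checklist questions for one suspect Python script."""
    home_library = os.path.abspath(os.path.expanduser("~/Library"))
    full_path = os.path.abspath(os.path.expanduser(path))
    return {
        # Question 8: does the file end the way the default build does?
        "ends_with_readlines": script_text.rstrip().endswith("readlines()))"),
        # Question 9: any of the typical import variations?
        "imports_os_or_subprocess": bool(IMPORT_PATTERN.search(script_text)),
        # Question 10: does it live somewhere under the user's Library folder?
        "under_user_library": full_path.startswith(home_library + os.sep),
    }
```

Three True values don't prove anything on their own, but they are a strong hint that the file deserves a closer look.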

Conclusion

EvilOSX is just one of an increasing number of Python RAT projects that are appearing on the internet. It’s not particularly sophisticated, and this is both a strength and a weakness. With modest programming skills, an attacker can modify the source code to increase the chances of evading automated detections. However, vigilant users can still identify EvilOSX if they know what to look for, as explained in the preceding sections of this post, or by contacting Sqwarq support for free advice.

Stay safe, folks! 🙂

What’s the difference between DetectX and DetectX Swift?

Since releasing DetectX Swift back in January, a lot of people have been asking me how the new ‘Swift’ version differs from the older one, aside from requiring 10.11 or higher (the original will run on 10.7 or higher).

Well sure, it’s written in Swift — and it’s much swifter, literally, but of course there’s a lot more to it than that.

I've finally had a spare moment to enumerate the feature list and create a comparison chart. Although the image above is essentially the same as the one you'll see at the link address at the moment, there are still a number of features to be added as we go through development of version 1. Thus, be sure to check the latest version of the chart to get the most up-to-date info.

Of course, if you have any questions drop me a comment below, or email me either at Sqwarq or here at Applehelpwriter.

Enjoy 🙂

how High Sierra updater leaves behind a security vulnerability

Some time shortly after the release of the High Sierra public betas last year, I started noticing a lot of user reports on Apple Support Communities that included something odd: an Apple Launch Daemon called com.apple.installer.cleanupinstaller.plist appeared, but its program argument, a binary located at /macOS Install Data/Locked Files/cleanup_installer, was missing.

An ‘etrecheck’ report on ASC

Being an Apple Launch Daemon, of course, the cleanupinstaller.plist is owned by root:

-rw-r--r-- 1 root wheel 446 Oct 10 06:52 com.apple.installer.cleanupinstaller.plist

After discussing this oddity with a few colleagues, I decided to see if I could catch a copy of the missing program argument. After first rolling back to an earlier version, I found that the macOS Install Data folder (along with the Launch Daemon plist) is created when a user runs the Upgrade installer. A clean install with the full installer does not appear to create either the property list or the program argument.

The Locked Files folder indicated in the program argument path is hidden in the Finder, but revealed in Terminal.

Inside the Locked Files folder is the cleanup_installer binary. The binary is 23KB, and the strings section contains the following, giving some indication of its purpose:

Upon a successful upgrade, the /macOS Install Data/ folder is removed, but the Launch Daemon is not, and therein lies the problem.

Let’s have a look at the plist:

The 'LaunchOnlyOnce' and 'RunAtLoad' keys tell us the program argument will be run just once on every reboot, and launchd will execute whatever is at the program argument path with root privileges. With the executable missing, as noted in numerous ASC reports, a malicious process could install its own executable at that path to aid persistence, or re-infection if the original infection were discovered or removed.

To test this hypothesis, I threw a quick script together that included a ‘sudo’ command.

#! /bin/bash

sudo launchctl list > /Users/phil/Desktop/securityhole.txt

The legacy command ‘launchctl list’ produces different results when it’s run with sudo and when it’s not. Without sudo, it’ll just list the launchd jobs running in the user’s domain. With sudo prepended, however, it’ll instead list the launchd jobs running in the system domain. This makes it easy for us to tell from the output of our script whether the job ran with privileges or not.

Having created my script, I created the path at /macOS Install Data/Locked Files/ and saved the script there as 'cleanup_installer'. It's worth pointing out that writing to this path itself requires admin privileges, so this issue doesn't present any kind of 'zero day' possibility. The attacker needs to have a foothold in the system already for the danger to be real. To repeat, the vulnerability here is the possibility of an attacker hiding a very subtle root persistence mechanism within a legitimate Apple Launch Daemon, making it all the more difficult to detect or remediate if otherwise unknown.

The final step was to chmod my script to make it executable, and then restart the Mac. Sure enough, after the reboot and without any other intervention from me, the script was executed and my Desktop contained a text file with a nice list of all the system launchd jobs!

Of course, that’s a trivial script, but here’s the tl;dr:

Anything – including code to reinstall malware – can be executed with root privs from that path every time a High Sierra install containing the Apple cleanupinstaller.plist reboots.

Remediation

If you’re already beyond your second reboot since updating and your /LaunchDaemons folder contains this property list, the obvious thing to do is to remove it (as High Sierra should have done when it completed the reinstall). It appears to serve no purpose once the program argument has been removed, other than to offer a way for malware to seek persistence.
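To see whether a Mac is in the state described above (the daemon present but its program argument missing), you can test for both paths. This is a minimal sketch: the function name is hypothetical, and it assumes the property list lives in /Library/LaunchDaemons, which the post only calls the /LaunchDaemons folder. The optional root prefix lets you try it against a scratch directory first. It only reports; it removes nothing.

```bash
#!/bin/bash
# Hypothetical audit for the leftover High Sierra installer daemon.
# Assumption: the plist lives in /Library/LaunchDaemons.
# $1 is an optional root prefix (e.g. a test directory); default is the live system.
audit_cleanupinstaller() {
  local root="${1:-}"
  local plist="$root/Library/LaunchDaemons/com.apple.installer.cleanupinstaller.plist"
  local program="$root/macOS Install Data/Locked Files/cleanup_installer"

  if [ ! -e "$plist" ]; then
    echo "clean: launch daemon not present"
  elif [ -e "$program" ]; then
    echo "daemon and program both present: upgrade may not have finished"
  else
    echo "at risk: daemon present but program missing"
  fi
}
```

Run audit_cleanupinstaller with no argument on the live system. If it reports 'at risk', the advice in this post is to remove the property list, which (on the /Library/LaunchDaemons assumption) would be sudo rm /Library/LaunchDaemons/com.apple.installer.cleanupinstaller.plist.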

Secondly, you should be able to safely remove the /macOS Install Data/ folder if you find that it exists. This is usually removed after a successful update, but it can also be left behind if a user cancels out of an update halfway through. If you do find it still lurking on your system, you can check that it is what it's supposed to be by copying and pasting this into Terminal:

strings -a /macOS\ Install\ Data/Locked\ Files/cleanup_installer

and confirm you get the same or similar as listed earlier in this post. On my system here, the file also gives a SHA-1 checksum of 945203103c7f41fc8a1b853f80fc01fb81a8b3a8. You can produce that on the command line with:

shasum -a 1 /macOS\ Install\ Data/Locked\ Files/cleanup_installer

However, it's entirely possible that Apple has already made, or may in future make, changes to that binary since I captured it, so a differing checksum on its own isn't proof of tampering and should be treated with caution.

Of course, even after you've removed these items, there's nothing to stop an attacker that's already compromised a machine from recreating both of them (indeed, there's nothing to stop a privileged attacker creating anything else on your system!). Thus, it's always a good idea to keep track of what changes occur on your system on a regular basis. My free/shareware tools DetectX and DetectX Swift are designed to do exactly this. In DetectX, after running a search, the log drawer will tell you if the /macOS Install Data/ folder exists:

NOTES:

1. This issue was reported to Apple Product Security in August 2017.

how to remove MyCouponize adware

MyCouponize is an aggressive adware infection that simultaneously installs itself in Safari, Chrome and Firefox. It hijacks the user's searches and page loads, redirecting them to multiple websites that advertise scamware and other unwanted junk.

You can remove it easily with DetectX Swift (a free/shareware utility written by myself) as shown in this video. If you prefer reading to watching, here’s the procedure:

1. Run the search in DetectX.

2. Click on the [X] button.

You’ll find this button just above the results table to the right, between the search count and the tick (whitelist) button. It will turn red when you hover over it. When it does so, click it.

Then hit ‘Delete’ to remove all the associated items.

You’ll need to enter a password as some of the items are outside of your user folder.

Press the esc key or click the ‘Cancel’ button on any pop up dialogs that appear.

3. Go to the Profiler

Here we’ll unload the launchd processes that belong to MyCouponize.

Navigate to the user launchd processes section and move the cursor over the item com.MyMacUpdater.agent.

Click the 'Remove x' button that appears when the line is highlighted.

Wait for the Profiler to refresh, then go back to the same section and remove the second process, com.MyCouponize.agent.

4. Quit the mediaDownloader.app

This item has already been deleted in step 2, but its process may still be running in memory. If its icon appears in the Dock, right-click on it and choose 'Quit' from the menu.

5. Finally, go to Safari Preferences' Extensions tab

Click the uninstall button to remove the MyCouponize extension.

After that, Safari should be in good working order. If you have Chrome, Firefox or possibly other browsers installed, make sure you remove the extensions or Add Ons from those, too.
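If you'd like to double-check from Terminal that nothing was left behind, you can look for files whose names contain the identifiers mentioned above (com.MyMacUpdater.agent, com.MyCouponize.agent and mediaDownloader). This is a minimal sketch, not a substitute for DetectX: the function name is hypothetical, and it assumes the agents' property lists are named after their launchd labels and sit in the usual LaunchAgents and LaunchDaemons folders. It only lists matches; it deletes nothing.

```bash
#!/bin/bash
# Hypothetical leftover check for MyCouponize (lists matches, deletes nothing).
# Assumption: plist filenames contain the launchd labels named in the post.
# Pass folders to search, or none to use the assumed default set.
find_mycouponize_leftovers() {
  local dirs=("$@")
  if [ ${#dirs[@]} -eq 0 ]; then
    dirs=("$HOME/Library/LaunchAgents" /Library/LaunchAgents /Library/LaunchDaemons /Applications)
  fi
  for d in "${dirs[@]}"; do
    [ -d "$d" ] || continue
    # Case-insensitive name match, top level of each folder only
    find "$d" -maxdepth 1 \( -iname '*MyCouponize*' -o -iname '*MyMacUpdater*' -o -iname 'mediaDownloader*' \)
  done
}
```

No output means nothing matched. You can also confirm that neither agent is still loaded in your user domain with launchctl list | grep -i -e MyCouponize -e MyMacUpdater, which should print nothing.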

DetectX and DetectX Swift are shareware and can be used without payment, so go grab yourself a copy over at sqwarq.com.